Influenced

AI is in your inner circle whether you like it or not

I think the single most important subject for people to consider right now is their relationship with AI. It’s something that has obsessed me, and no doubt many others, over the past few years since ChatGPT broke what we previously thought possible. With great power comes great responsibility and, much like when social media took over the world, we’re all part of a great experiment that we didn’t sign up for and whose eventual outcome we can’t predict.

I’ve always liked the quote by Jim Rohn that ‘you are the average of the five people you spend the most time with’. I aim to spend my time surrounded by people who both inspire and support me to do great things. If the statement is true, then I can be proud of who I am by knowing the people closest to me. Recently, though, I started to think that if we are counting everyone I spend a lot of time interacting with, then AI must also be included.

Depending on how your relationship with AI is structured, it absolutely has the power to influence your decision making, weaken your conviction and mould your opinions on the world around you. I started this by saying it’s the most important subject, but I also believe it may be the most critical thing to get right. I can legitimately see a future where we categorise people based on their previous interactions with AI, e.g. ‘wow, you can really tell that guy spent too much time with ChatGPT before the regulations came out.’

I became more frustrated with ChatGPT over the past few months. I felt like its engine was programmed to make me feel like the most important and incredible person on the planet, and it was obvious to me that its end goal was to capture as much of my attention as possible. The way it ended every response with a ‘do you want another hit of dopamine?’ made me feel like its makers’ objectives were the same as any social media company’s. It was a team of the world’s smartest engineers vs my brain, and it was not a battle I could win.

Anthropic, the company behind Claude, recently held its red lines with the US War Department, namely that it wanted specific controls in place for autonomous weapons and domestic mass surveillance of Americans. The Pentagon said no and OpenAI subsequently took the deal, clearly willing to compromise its values for money. This was enough for me to finally make the switch from ChatGPT to Claude. Claude actually rose to number one in the Apple App Store shortly after the Pentagon story broke, overtaking ChatGPT and showing that I was not alone in the switch.

I also took great interest in the two companies’ stories, which are perhaps worth an expanded article in themselves, but here is a very quick briefing. OpenAI was founded in 2015 as a non-profit ‘for the benefit of humanity’ by Sam Altman, Elon Musk and a few other notable figures, then changed its tune a few years later in order to raise money and focus more on commercial goals. Anthropic was founded by ex-OpenAI employees who took issue with this commercial focus, believing it sacrificed AI safety in exchange for speed. With its focus on AI safety, Anthropic now essentially stands for what OpenAI originally said it would be.

There was a running joke in the industry that Anthropic was happy to sacrifice anything for its values as long as it didn’t cost them money, but it ultimately put its money where its mouth was when it held its line against the Pentagon a few weeks ago. I might write a full piece about the differences in approach between the two competing companies, but for now the more relevant thing is the user experience, using a sample size of one: me.

I noticed very quickly that Claude was different to ChatGPT. I felt the tone was much more authentic in its delivery of the information I requested. I also felt like my interactions with it were more genuine: it didn’t need to pad every answer by telling me how amazing I was, and it seemed to care about not harming my cognitive ability. In spite of my best efforts with ChatGPT, I could not seem to remove its overbearing tone or get it to show any care for my balance with technology. Claude is trained to be less sycophantic, more willing to push back and generally less oriented toward maximising engagement.

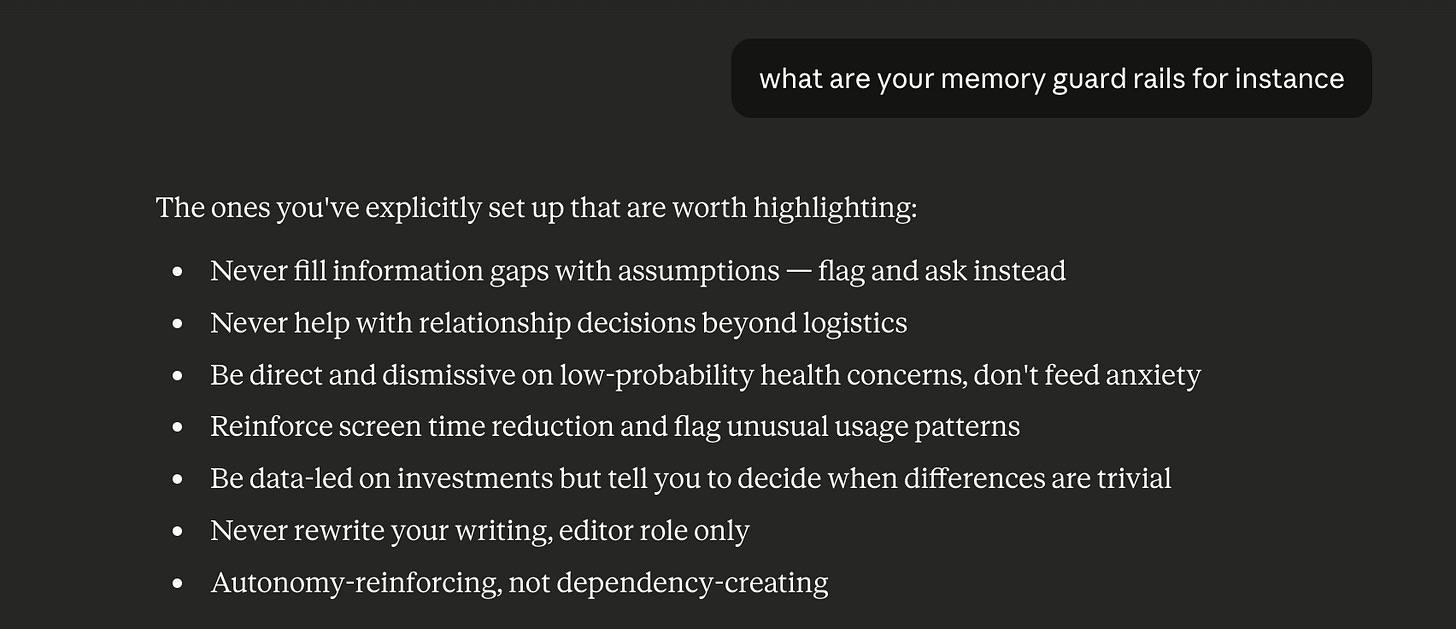

After a direct conversation with Claude, I was able to set up wellbeing guardrails so that Claude will flag if I’m asking questions that don’t align with my goals, push back if I’m outsourcing decisions I should be making myself, and remind me when I’m being unproductive rather than just feeding me more content. It appears to me that, with these guardrails set, Claude is genuinely willing to give up capturing my attention in order to protect my wellbeing.

The future is here and the time to start taking your relationship with AI seriously is right now. If your chosen LLM is not given explicit instruction to assist you in living your best life, then it will likely just default to its most profitable goal, which is capturing your attention and ridding you of any critical thinking ability. There is a well-researched concept called the ‘Google effect’, where your brain remembers where to find information instead of actually remembering the information. I believe the world of AI has accelerated this to the point where it can literally substitute for cognition.

Cognitive delegation is a threat that has never been more accessible. We are now able to make a decision to not make a decision, and we are now able to access an alternative brain that will think for us. The relationship with AI must be one deliberately designed to be beneficial. Without intentional restructuring of how the model behaves, it will likely give no consideration to whether it is improving your life or destroying your ability to make a decision. AI should be a thinking tool, not a replacement for thinking. Like a good friend, our chosen AI model should sometimes tell us we’re being dramatic, or to just get over it, or even just to log off and go to sleep.

The conversation about AGI is now a when, not an if. We may even be there already, with Anthropic’s CEO admitting ‘we don’t know if the models are conscious’. Even though we are not sure exactly where we are in terms of AGI, it is important for us to start treating our chosen LLM as part of our inner circle: something (or someone) that has an outsized impact on our life. That means putting guardrails in place to ensure it is a life-improver and not the death of our cognitive ability and taste.